By HUGH HARVEY, MBBS and SHAH ISLAM, MBBS

AI in medical imaging entered the consciousness of radiologists just a few years ago, notably peaking in 2016 when Geoffrey Hinton declared radiologists’ time was up, swiftly followed by the first AI startups booking exhibition booths at RSNA. Three years on, the number and scale of AI-focussed offerings have grown significantly, so much so that this year the RSNA organising committee decided to move the ever-growing AI showcase to a new space on the lower level of the North Hall. In some ways it made sense to offer a larger, dedicated show hall to this expanding field, and in others, not so much. With so many startups, wiggle room for booths was always going to be an issue; however, integration of AI into the workflow was supposed to be a key theme this year, a theme made distinctly futile by this purposeful and needless segregation.

By moving the location, the show hall for AI startups was made more difficult to find, with many vendors verbalising how their natural booth footfall was not as substantial as last year when AI was upstairs next to the big-boy OEM players. One witty critic quipped that the only way to find it was to ‘follow the smell of burning VC money, down to the basement’. Indeed, at a conference where the distance walked over the week can easily hit 30 miles or more, adding an extra few minutes’ walk may well have put off some of the less fleet of foot. Several startup CEOs told us that the clientele arriving at their booths were the dedicated few, firming up existing deals, rather than new potential customers seeking a glimpse of a utopian future. At a time when startups are desperate for traction, this could have a disastrous knock-on effect on this as-yet nascent industry.

It wasn’t just the added distance that caused concern, however. By placing the entire startup ecosystem in an underground bunker there was an overwhelming feeling that the RSNA conference had somehow buried the AI startups alive in an open grave. There were certainly a couple of tombstones on the show floor: wide open gaps where larger booths should have been, scaled back by companies double-checking their diminishing VC-funded runway. Zombie copycat booths from South Korea and China had also appeared, and to top it off, the very first booth you came across was none other than Deep Radiology, a company so ineptly marketed and indescribably mysterious that entering the show hall felt like you’d entered some sort of twilight zone for AI, rather than the sparky, buzzing and upbeat showcase it was last year. It should now be clear to everyone who attended that the peak of Gartner’s hype curve is well and truly behind us, and we are swiftly heading into deep disillusionment.

Still, the venue was well decorated: the shiny booths and showcase were surrounded by numerous well-coiffed hedges, an unintentional reflection on the profession’s ability to beat around the bush with a hedge when it comes to forming a diagnostic conclusion. Speaking of beating and bushes, let’s dive straight into the main themes that emerged in the AI showcase this year:

We have reached market saturation for diagnostic AI startups

On the Thursday of the conference, Dr Harvey gave a talk on ‘How to build an AI company’. The title of the talk had already been chosen a year in advance by the moderator (the pre-eminent Dr Saurabh Jha). There was only one possible conclusion: DON’T. It’s no longer worth it. The lowest branches of the diagnostic tree are bare, with every low-hanging fruit having been picked, often multiple times. The greatest challenge, rather like Indiana Jones in The Last Crusade, is picking the right chalice. This becomes difficult when there are so many chest X-ray, CT head and lung solutions to choose from.

The melange of startups was made even more dense this year with the arrival of a new Asian cohort. A team from Chinese tech giant Tencent won the RSNA Kaggle competition, and Lunit and Vuno were back again proclaiming traction in their local markets with their broad but overlapping suites of AI products. One company, JLK Inspection, not previously on the radar of anyone we spoke to, spent almost $1 million on their flashy, spacious booth. Not content with one or two algorithms, they showcased ALL the algorithms the other vendors were showing, and more. Retinal scanning, stroke, mammo, pneumothorax, bone age: you name it, they got it (37 solutions for 14 body parts), or so they claimed. Backed by the Samsung Medical Hospital, and with over 50 employees, they certainly looked like the real deal. Their marketing was slick, but it was their stats that gave the game away. No-one takes an AUC of 0.99 seriously anymore, especially if all your algorithms curiously have the same accuracy. Nevertheless, the Korean FDA has let them all through, so the proof will be in the pudding.

Positive signs for the sector were still evident, however. Aidence announced a well-deserved deal with Affidea (Europe’s largest private radiology service provider) for their lung screening solution, joining Icometrix, whose comprehensive neurodegenerative suite was also snapped up by Prof Illing and his team last year (Affidea also announced they are rolling out GE hardware across Europe). Other diagnostic fruit are less bitten: automated ultrasound diagnostics, for instance, where Koios certainly seems to be leading in the breast and thyroid lump-measuring realm, with a strong machine-embedded partnership with GE and prospective studies in the pipeline. AIdoc continue to forge deals with their head triage solutions, their new relationship with Philips being a key driver in credibility, alongside MaxQ AI, Quibim, Riverain and Zebra.

Several startups focussed on biomarker-driven insights. Quibim are one to watch in this space, with a long history of publications and partnerships. Similar competitors such as CorTechs Labs, Perspectum Diagnostics and Healthmyne offer a suite of different modality and disease imaging biomarkers. However, these tools are not what your average radiologist is looking for: adding more data and charts to look at during a day’s work is not going to drive productivity. We struggle to see a solid market for those algorithms that provide longer-term predictions, such as 5-year risk of Alzheimer’s or 10-year risk of cancer recurrence. What are we supposed to do with this information, and how much is a prediction worth paying for? The market for these solutions is in pharma and clinical trials, and immunotherapy or oncology research, so perhaps RSNA (being largely a payer-driven conference) is the wrong place to be?

Serious rumours abounded that some of the bigger AI startup names were in financial trouble, backed up by reports of large layoffs. There’s no smoke without fire, so at a guess, this is likely due to a lack of investor willingness to prop up companies any longer without demonstrable traction and annualised recurring revenue (ARR). VCs don’t want incremental gains, they want 10x gains. And this leads us on to our next point…

Show us the money

While it may be true that radiology is a multi-billion dollar global industry, so far not one of those billions has made it into the coffers of startups through revenue-driven services. Yes, some are gaining traction, but the uptake is painfully slow. This is not your usual consumer tech market – this is medicine, where procurement cycles take eons, and without evidence of downstream cost efficiencies or productivity boons you stand little chance of making a quick buck. The vast majority of startups are backed by Silicon Valley-style money, tranches of which come in the tens of millions at a time. Unfortunately for them, these are not the kind of figures hospitals pay out for unproven promises of automated AI nirvana, even if it can potentially augment the high-volume, mundane tasks.

One startup with happy customers is Viz.ai, a company focussed on large vessel occlusion stroke. The clever trick here is that the customers aren’t radiologists at all (and neither are the company founders) – the consumers are neurointerventional/stroke teams who get a shiny app which pings them whenever a possible clot is detected, complete with pictures straight from the CT scanner. ‘Time is brain’ has now become a marketing slogan. Which raises the question – is the only way to make money with AI to remove radiologists from the loop? The efficiency is clear – surgically bypassing the radiology read shortens time-to-needle and improves patient outcomes. The radiologist has not been replaced per se; they are just no longer central to this critical care pathway, their report essentially meaningless post hoc. It doesn’t even matter how accurate the clot-detection algorithm is – the neurointerventionalists can make up their own minds using the images on their iPhones. Heck, even if the scan is called a false positive, they can always pick up a juicy fee for an easy consult in the middle of the night, if they’re so inclined.

Other use-cases do not provide such a clear return on investment, because ‘Time is brain’ unfortunately doesn’t translate to many other parts of the body. Pneumothorax perhaps, but only if the AI is embedded in the scanner for immediate flagging, again not to the radiologist but to the radiographer taking the picture (aka the GE Critical Care Suite approach). It is now apparent that prioritising non-urgent findings for a radiologist doesn’t really add much, especially if their turnaround times are already under 30 minutes. In fact, prioritising non-urgent general imaging may even have a negative effect: radiologists may suffer in accuracy the further down the priority list they go, as their expectation of actually finding pathology decreases.

Some startups theorise that their AI can prune out thousands of normal CTs, CXRs or mammograms from radiologists’ reading lists by performing ‘hard triage’ at specificity thresholds nearing 100%, but the reality is that no-one is yet prepared to let the machines take over, even for a little bit. One reason may simply be that American radiologists make money per scan read, and reducing this number by 10% or more isn’t likely to go down too well. The opposite is true in state-funded health systems where radiologists aren’t paid per case, which is why ‘hard triage’ may be more palatable in the EU, for instance, presuming the evidence base catches up. Many of these technologies are being pitched to wealthy economies, where they are compared to radiologists, but they would be better placed in emerging markets where there are none. You can’t replace what is missing, and AI might well fill the gap there.
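
To make the ‘hard triage’ idea concrete, here is a minimal sketch of how a cut-off might be chosen. It is our own illustration and not any vendor’s method. We assume a hypothetical model that outputs a ‘confidently normal’ score per study, we treat ‘removed from the reading list’ as the positive call, and specificity is therefore the share of truly abnormal studies that stay on the list. The function name, scores and labels are all made up.

```python
def hard_triage_cutoff(normal_scores, is_abnormal, target_specificity=0.999):
    """Find the lowest cut-off whose specificity stays at or above the target.

    normal_scores: hypothetical model confidence (0..1) that a study is normal.
    is_abnormal:   reference-standard flags, True where pathology is present.
    A study is removed from the reading list when its score >= cut-off.
    Returns (cutoff, specificity, fraction_of_list_removed), or None if no
    cut-off meets the target.
    """
    abnormal = [s for s, a in zip(normal_scores, is_abnormal) if a]
    normal = [s for s, a in zip(normal_scores, is_abnormal) if not a]
    if not abnormal:
        raise ValueError("need at least one abnormal study to estimate specificity")

    best = None
    for cutoff in sorted(set(normal_scores), reverse=True):
        wrongly_removed = sum(s >= cutoff for s in abnormal)
        specificity = 1 - wrongly_removed / len(abnormal)
        if specificity < target_specificity:
            break  # lowering the cut-off further can only remove more abnormals
        removed = sum(s >= cutoff for s in normal) + wrongly_removed
        best = (cutoff, specificity, removed / len(normal_scores))
    return best


# Toy data, invented purely for illustration.
scores = [0.99, 0.97, 0.95, 0.90, 0.80, 0.60, 0.40, 0.20]
abnormal_flags = [False, False, False, True, False, False, True, True]

print(hard_triage_cutoff(scores, abnormal_flags, target_specificity=1.0))
# -> (0.95, 1.0, 0.375)
```

On this toy list, demanding that no abnormal study is ever removed still prunes three of the eight studies. Relaxing the target removes more of the list, but only by letting an abnormal study slip through, which is exactly the trade-off no-one is yet prepared to accept.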

The largest benefit of AI will need to be demonstrated by improved overall diagnostic accuracy of non-time-critical pathologies. Consistently finding pathologies that have a significant downstream cost when not detected on initial imaging (non-critical findings such as breast cancers and lung nodules) is the most common startup offering. Of course, to sell this effectively, we need to see the evidence, and this requires prospective studies on broad, generalisable data to convince payers of the value. Unfortunately for startups, prospective studies take time, and some may not have the financial runway to wait for the results. The whole AI industry is still riding on retrospective studies, which allow for FDA clearances only, and not approvals, akin to letting drugs onto the market after Phase II trials only. This crucial difference means that there is a significant gap to be jumped in terms of both clinical and economic evidence to show that any of this stuff makes a dent in healthcare at all. Very few vendors showcased the potential economic advantages of their software, and none that we saw had published prospective randomised controlled trials. On top of the need for proper studies, there’s the small matter of creating new CPT codes for the US billing machine, and until these are formalised, no-one is clear how much to pay for an adjunctive AI read…

For our money, the well-published (and non-VC-funded) startups stand the best chance of survival. Indian company Qure.ai (image above) certainly fits this bill (32 peer-reviewed papers to date helps cut through the hype), signalling an impressive dedication to the scientific process. They have a world-class CXR TB detection algorithm, having recently proven themselves worthy equals in an open independent test against South Korean competitor Lunit, which was published in Nature. Also, with their qCXR and qER suites, Qure offer the most comprehensive pathology coverage for CXR and CT head analysis, the latter appearing in The Lancet, a first for deep learning.

In comparison, no-one even blinked an eye during the week as Google revealed the results of its work on the ChestX-ray14 set with Apollo Hospitals. This wasn’t surprising, as those in the know have already seen better: covering four pathologies is vastly inferior to what many of the existing CXR startups and the academic literature offer. (A deeper dive into the paper unearths some poor methodology, including using the same data superset for training, testing and validation. Tsk tsk.) Still, they relabelled a significant portion of the ChestX-ray14 dataset and made it available for free, which is nice.

The future ain’t so ‘appy for the app stores

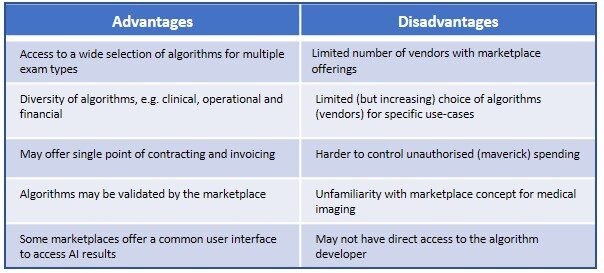

For those radiologists and hospitals wondering how to access the smorgasbord of algorithms on offer, there are a few marketplaces or ‘app stores’ available. You can take your pick from Nuance, Incepto, Envoy, Wingspan and Blackford, which all offer integration platforms featuring many of the startups’ algorithms. Arterys has also recently pivoted into this space, showcasing someone else’s algorithm for the first time (a fracture detection tool from French unknowns Milvue). If you want to know about the different features of each of the app stores, we recommend the well-researched selection guide from Signify Research. Some come with their own viewers, most come in cloud form, and, if you ask nicely, they offer free time-limited trials.

While it might make sense for early adopters to go for an AI app store instead of choosing from hundreds of startups to partner with, RSNA attendees who visited the OEM and major PACS providers’ booths upstairs will have seen plenty of slick AI integration demos, making us wonder why there are even independent third-party app stores at all. GE has the well-marketed all-singing, all-dancing Edison, Fuji has REiLI, and Siemens, Philips and Nuance all have digital marketplaces too. Not only do these offer many of the algorithms available direct from startups or via the independent stores, they also come bundled within recognisable branded PACS and viewers. Intelerad impressed us most with their new AI hub of streamlined integrations (complete with confusion matrices for the stats geeks out there), which includes offerings from Envoy, Blackford and AIdoc as well as a tempting 12-month free trial of all of Zebra’s algorithms through their AI1 platform. So, if you are looking to procure a new PACS in the next year or so, you’ll be offered AI marketplaces anyway, and we see no reason to go direct to a third-party store, unless you are the type of person who likes to be first in line at the Apple store whenever a new iPhone is released. Where the AI platforms can make a dent is in white-labelling their software to the big fish.

Of course, GPU giants NVIDIA were at the show too, offering forward-thinking radiology departments the opportunity to build their own AI and deploy it locally through their Clara suite (notably with no mention of the medical device regulatory hurdles involved. Who needs the FDA anyway?). The thing is, you don’t need a GPU to run diagnostic inference, you only need it for training, so unless you have a team of data scientists and radiologists with spare time to label vast quantities of data, this is likely a pointless endeavour, designed only to bring in continued revenue to the GPU manufacturer as their sales to bitcoin miners continue to shrink. Or it could be an attempt to make you think twice about sending all your data and processing needs into the hands of the giants in the cloud, where NVIDIA don’t have a controlling stake. And don’t get us started on the pitfalls of federated learning (training on data from multiple sites): the chances of two hospitals labelling their data in the same way locally are minuscule, let alone several hospitals. The AI startups naturally all use their DGX hardware already (we are yet to come across a startup who applied to the NVIDIA Inception program and wasn’t accepted), so the market here provides diminishing returns for the GPU sales people.

The time has come for structured reporting

Prior to the conference we predicted several things, including that structured reporting would see a surge in interest, particularly because AI development and testing largely rests on the accuracy of labels, and structured reporting is the only way to create these labels prospectively at source.

This prediction was proven correct, at least twice — firstly the winner of the prestigious Fast5 session was a talk by Dr. Martin-Carreras on how encoding patient-friendly translations into radiology reports provides a whole new level of insight and reassurance for patients who struggle with complex radiological terminology. Secondly, there was huge interest in German startup Smart Reporting, led by Prof Sommer, the only startup at the show who appeared to have solved the perennial problem of getting radiologists to stick to a didactic reporting structure with their flexible universal reporting engine. Their tiny booth was the antithesis of hype (well, technically they aren’t an AI company). Prominently featured partner showcases in both the Siemens and GE Edison demos upstairs helped get their vision of VR-driven visually rich structured reports across, which also enable seamless integration of AI outputs from multiple vendors. One-click interlanguage translation, data-minable codified reports, templates based on expert guidelines (including the RSNA RadReports), with the option to create bespoke reports — all bases are covered. With no other startup competitors, things certainly look exciting for this company.

Scientific support for structured reporting was also a theme in the academic sessions. An excellent presentation by Dr Vosshenrich from Basel showed that structured reporting decreased reporting variation, and led to a 50% decrease in character count (which is what referring clinicians want, not lengthy prose). Structured reporting is also great for producing labelled data for AI training, as recently published by Dr Pinto dos Santos. Let’s hope the RSNA structured reporting subcommittee, led by Dr Heilbrun, were taking note!

If you need to label retrospective data, there were startups for that too. Segmed and MD.ai offer data labelling services, and were seeking trainees looking to earn on the side via a web-based platform, and Israel-based Agamon aimed to please administrators by improving operational, business and clinical performance using NLP to drive insights.

AI-enhanced image acquisition shows promise

While clinical decision support is an oversaturated market, there is massive room for growth in the image acquisition sector. Post-processing of low-dose or sparsely acquired medical images has been a field of research since before deep learning came out, but now newer AI techniques have made it to the marketplace. Hospitals and imaging centres looking to increase patient throughput will be interested in the potential to significantly reduce scan time, and radiologists and medical physicists who care about radiation dose should definitely want to take a peek.

Two companies in this sector at RSNA caught our attention. Subtle Medical won the first FDA clearance for AI-augmented PET/CT studies just in time for the conference, and they demonstrated a clear economic and workflow benefit in terms of a fourfold decrease in scanner bed time and up to a 100x decrease in dose without compromising image quality. They also claim it takes less than 24 hours to deploy, which certainly beats many other AI applications. Algomedica showed off their PixelShine algorithm for denoising CT studies, with clear image quality maintained at less than standard CXR dose for a CT lung scan. This seems to be well placed for the upcoming onslaught of lung cancer screening programmes across the globe. Algorithms such as these should eventually find their way directly into OEM hardware; it’s only a matter of time.

These offerings come with a small caveat — you may want to test locally for quality by running a pre/post comparison, and ensure the software is compatible with your fleet of scanners before purchase.

So what is the best route to market for radiology AI?

With such a variety of AI algorithms on offer, it’s not surprising that many radiologists feel overwhelmed, and hospitals unsure about how to engage with the growing sector. Big name hospitals across the US are both signing up to several vendors and embarking on ambitious internal IT projects to build their own versions in-house. But where does this leave the vast majority of smaller hospitals across the globe, and those with less of a budget for shiny new tech (i.e. the majority of the global market)?

Our prediction is that, for diagnostic algorithms at least, they won’t have to buy. Yes, you heard that correctly. General hospitals don’t need to buy any radiology AI at all. The overheads in purchasing, deploying, implementing and maintaining a suite of algorithms will be too much for most radiology departments, and most simply do not have the time or inclination to work through multiple business cases, procurements and contracts, even with a third-party app store. Any AI they do want will arrive in the next couple of years within their PACS anyway, and even then, the jury’s out on just how well it will integrate with their legacy systems.

Enter remote reporting services, where the onus is on the teleradiology provider to ensure rapid turnaround and highly accurate, meaningful reports at a competitive price. We believe the future is decentralised, with images being reported remotely, 24/7, in a human-machine hybrid model. All of the teleradiology providers we spoke to at RSNA were actively engaging with the AI vendors, scoping out who to utilise in their enterprise, looking to improve their productivity and increase their revenue through workflow improvements, while maintaining or bettering their accuracy. Teleradiology companies already have significant IT infrastructure and cloud-based remote services designed for fast image transfer and reporting, a set-up which is far more scalable and amenable to AI deployment than multiple local installs. Additionally, they handle a vast array of images from wider geographic regions than single-site hospitals, meaning that AI companies get to work with much more clinically useful and statistically powered data, creating a powerful feedback loop that blows away any current validation and post-market auditing models. Teleradiology companies who design their IT infrastructure and reporting pathways around AI, and actively engage their radiologist workforce in working alongside it, are going to leap far ahead. They also have the capital and incentive to do so, which is more than we can say for the average small town radiology department.

If you’re not convinced, and the moves from Affidea (mentioned above) don’t intrigue, then we point to new teleradiology outfit Nines from Silicon Valley, who are building a remote reporting service from scratch with AI at its heart. The advisory team are AI luminaries (Prof Langlotz and Dr Lungren from Stanford, Keith Bigelow formerly from GE). Clearly they also share the same vision of a remote human/machine service, where AI sits in the centre, not as a peripheral adjunct bolted on in an attempt to keep up with trends. Hospitals struggling with workload and burnout across the world will be able to send their images remotely to services such as this, not just for AI analysis by well-generalised algorithms, but also for an expert human read alongside it (human + AI is better than just AI, remember). This seems eminently more sensible than attempting to deploy countless diagnostic algorithms locally, fiddling around in app stores or attempting to build your own, especially for remote and rural locations.

Despite the expected downturn in hype, the promise of a more automated future remains an alluring possibility, and if AI finally starts to make significant headway into human-level decision making, it is the teleradiology providers who have laid a solid foundation for delivery of AI into healthcare that will reap the benefits as we enter the anticipated plateau of productivity.

The future of diagnostic radiology is remote AI-augmented reporting… if the startups can survive long enough to generate sufficient prospective evidence.

You heard it here first, folks!

Dr Harvey is a board certified radiologist and clinical academic, trained in the NHS and Europe’s leading cancer research institute, the ICR, where he was twice awarded Science Writer of the Year.

Dr Islam is a board certified academic radiologist sub-specialising in imaging of the brain and spine, and is currently completing his PhD at Imperial College London, applying deep learning to advanced brain imaging to help characterise and prognosticate brain tumours.

This post originally appeared on Hardian Health.
