Dor Skuler is CEO of Intuition Robotics, the maker of ElliQ, a remarkable AI robot that is a companion for seniors. I had a lot of fun meeting ElliQ and asking Dor about how she works. This is a wide-ranging interview with Dor and with ElliQ. She tells us about Florence Nightingale and what Dor should do with his kids, and really gives you an idea of how she relates to seniors. There are a ton of capabilities–you really have to watch the whole thing–but the end result is that Medicaid plans including NY and Washington State have determined that ElliQ allows people to stay at home longer and saves $$ on nursing home care. A fascinating view into the present and the future of how AI and robotics are changing the world–Matthew Holt

Don’t Bury The Lead – AI Assisted Measures of Thymic Health Point to a “Fountain of Youth.”

By MIKE MAGEE

In their final summary of the landmark paper in Nature this past month, the authors led with this statement: “This study underscores the highly personalized nature of thymic health and emphasizes the previously unrecognized possible critical role of maintaining thymic health to preserve an agile, adaptive immune response that will accommodate long-term well-being and longevity.”

The article’s clinical significance was rapidly rebroadcast by a range of popular science publications like Scientific American. Its March 18th headline read “This overlooked organ may be more vital for longevity than scientists realized.” Mass General publications trumpeted, “Long Dismissed in Adult Health, the Thymus May Be Critical for Longevity and Cancer Treatment.” And global outlets went a step further with “Once dismissed as biologically obsolete after adolescence, the thymus is now being reclassified as a central regulator of immune aging, with new evidence linking its health to survival, cancer resistance, and how the human body ages itself.”

In their own Abstract, the authors of the Nature publication were somewhat more reserved, and yet the message is still remarkably consequential. They write, “These findings reposition the thymus as a central regulator of immune-mediated ageing and disease susceptibility in adulthood, highlighting its potential as a target for preventive and regenerative strategies to promote healthy ageing and longevity.”

But what intrigued me in the case above was barely mentioned by reviewers so excited by the primary clinical findings. My question was, “How did they measure thymic functionality?” The short answer is, they measured it with the help of an AI deep learning system.

As the authors explained, “In this study, we investigated the impact of thymic functionality, here called thymic health, in adults… For quantification of thymic health, we developed a deep learning system using an independent dataset of 5,674 individuals to determine compositional radiographic characteristics of the thymus as a proxy for its functionality. The system takes a CT scan as input and provides the automatic continuous thymic health estimate as output….We applied the system to prospectively collected data from a total of 27,612 individuals from two cohorts, including 2,581 participants in the FHS and 25,031 participants in the NLST… For outcome analyses, participants were categorized as low, average or high thymic health based on the bottom 25%, middle 50% and top 25% of the population.”
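The grouping step the authors describe (bottom 25%, middle 50%, top 25%) is easy to picture in code. What follows is only a rough sketch, not the authors' pipeline: the function name `categorize_thymic_health` and the sample scores are hypothetical, and the paper may well compute its cut points or handle ties differently.

```python
from statistics import quantiles


def categorize_thymic_health(scores):
    """Label each continuous score as low, average, or high by quartile."""
    # quantiles(..., n=4) returns the three quartile cut points (Q1, median, Q3).
    q1, _median, q3 = quantiles(scores, n=4, method="inclusive")
    labels = []
    for score in scores:
        if score <= q1:
            labels.append("low")       # bottom quarter of the population
        elif score >= q3:
            labels.append("high")      # top quarter of the population
        else:
            labels.append("average")   # middle half of the population
    return labels


# Hypothetical scores standing in for the model's continuous output.
example_scores = [1, 2, 3, 4, 5, 6, 7, 8]
example_labels = categorize_thymic_health(example_scores)
```

With real data the cut points would come from the whole study population, so an individual's label depends on who else is in the cohort.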

This new methodology to demonstrate different levels of thymic functionality turned out to be groundbreaking when cross-referenced with decades-long longitudinal databases. Associations with cardiovascular disease and lung cancer; history of smoking, obesity, and high HDL levels; disabilities, morbidity and mortality; sex and age all reinforced that prolonged functionality of the thymus correlated with both health and longevity.

Philippe Pouletty, Carvolix

Philippe Pouletty is a physician, inventor, French venture capitalist, and the founder of Carvolix. Carvolix is a medical technology company that is introducing AI into cardiology. Before Carvolix, Philippe was the founder of Abivax, which makes drugs for chronic inflammatory diseases like ulcerative colitis. He’s been working on helping French medical products develop further before their makers have to sell to bigger US companies, and Carvolix is the latest. It offers an AI system that guides cardiologists and a robot that places heart valves. It’s of particular interest to me, as I need a new heart valve. I had a long and interesting discussion with Philippe about the future of cardiology, particularly heart valve replacement, and also about their upcoming product, a robot to bust brain clots–Matthew Holt

Tom Kelly, Heidi Health

Tom Kelly is the CEO of Heidi Health, another of the many ambient AI scribes that is spreading its wings to other roles, including bringing out its own AI competitor to Open Evidence! He calls it an AI care partner. Heidi started in Australia and quickly moved to the UK and Canada, but is now in over one hundred countries. More recently it has come to the US and now has four major health systems and a lot of other mid-market users. Tom thinks Heidi will soon do all the “work around the work”, and he doesn’t think it has to be deeply integrated with the EMR. He sees that as a superpower, as doctors don’t want to be in the record. Is he right? Are scribes and ambient AI going to be separate? Does the scribe have to be a medical device, as it does in the UK? Will patients use it? Lots of questions about the future and Tom has lots of answers. Some might even be right!–Matthew Holt

Ian Shakil, Commure

Ian Shakil is the Chief Strategy Officer of Commure, the AI platform being used by HCA, Tenet and others. He came to Commure via its acquisition of Ambient AI vendor Augmedix, and there are a lot of other new acquisitions within Commure (Athelas, PatientKeeper, Memora Health, Rx Health etc). We dived into not only what Commure does but also the big question of how a client like HCA or Tenet decides what Commure does, vs what Meditech does, vs what Google does, vs what they do internally. We also (sorta) looked into the various criticisms (basically all from Sergei Polevikov!) of what Commure and its main funder General Catalyst are up to, and what is happening at Summa Health, the hospital in Ohio that GC bought. He also says the good experience from AI will come to help patients this year, and I’ll be holding him to that!–Matthew Holt

Beyond Generative AI

By BENJAMIN EASTON

Healthcare’s administrative burden is not a documentation problem. It is a workflow problem. Healthcare’s next leap depends on agentic systems that can actually do the work.

Over the past year, healthcare organizations have widely adopted generative AI for an array of documentation-related activities such as drafting appeal letters, producing patient-friendly summaries, and even assisting with administrative writing. While these tools have improved how information is created, healthcare’s administrative bottlenecks (e.g., prior authorizations, benefit verification, denial management, clinical trial enrollment) are not caused by a lack of text. They are caused by fragmented systems, manual tracking, payer variability, and workflow handoffs that require continuous monitoring and intervention.

If generative AI helps write the email, agentic systems send it, track it, escalate it, reconcile the response, and close the loop.

That distinction is healthcare’s next inflection point.

From Content Generation to Workflow Execution

An agentic system is not just a chatbot layered onto healthcare workflows. It is a coordinated set of AI-driven agents designed to:

- Pull structured and unstructured data from EHRs, payer portals, labs, and internal systems

- Apply payer-specific policy logic

- Validate documentation requirements

- Submit transactions through the appropriate channel

- Monitor status changes

- Trigger follow-up actions

- Escalate exceptions to humans

- Log every action for audit and compliance

Behind the scenes, these systems rely on rule engines, structured clinical mappings, secure API integrations, and event-driven automation frameworks. They continuously re-evaluate state changes (e.g., a new lab result, a status update from a payer portal, or a missing documentation flag) and dynamically adjust next steps.

This is not robotic process automation replaying keystrokes. It is intelligent orchestration across disconnected systems.
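To make the "re-evaluate state, then pick the next step" idea concrete, here is a minimal sketch of an event-driven handler for a single prior authorization case. Everything in it is hypothetical and for illustration only: the `PriorAuthCase` class, the `REQUIRED_DOCS` rule, the event names, and the action strings are assumptions, not any vendor's actual design. A real system would call payer and EHR integrations where this sketch simply returns a label.

```python
from dataclasses import dataclass, field

# Hypothetical payer rule: documents that must be on file before submission.
REQUIRED_DOCS = {"clinical_note", "lab_result"}


@dataclass
class PriorAuthCase:
    case_id: str
    status: str = "draft"
    documents: set = field(default_factory=set)
    audit_log: list = field(default_factory=list)


def handle_event(case, event, payload=None):
    """Apply one event to the case, then decide the next action."""
    if event == "document_added":
        case.documents.add(payload)
    elif event == "payer_status":
        case.status = payload  # e.g. "pending", "approved", "denied"

    # Re-evaluate the whole case state after every event.
    missing = REQUIRED_DOCS - case.documents
    if case.status == "draft" and not missing:
        case.status = "submitted"
        action = "submit_to_payer"
    elif case.status == "draft":
        action = "request_documents:" + ",".join(sorted(missing))
    elif case.status == "denied":
        action = "escalate_to_human"
    elif case.status == "approved":
        action = "close_case"
    else:
        action = "keep_monitoring"

    # Log every decision for audit and compliance.
    case.audit_log.append((event, payload, action))
    return action


case = PriorAuthCase("PA-1")
actions = [
    handle_event(case, "document_added", "clinical_note"),
    handle_event(case, "document_added", "lab_result"),
    handle_event(case, "payer_status", "pending"),
    handle_event(case, "payer_status", "denied"),
]
```

The point of the design is that no step is hard-wired to the one before it: each event triggers a fresh look at the case, and only the exceptions land in front of a human.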

Preeti Bhargava, Arintra

Preeti Bhargava is CTO of Arintra. She is the living embodiment of my crack that the smartest people in the world spent the 2010s convincing people to click on ads and now spend their time figuring out how to bill payers more for providers doing the same work. Arintra is in the RCM business. It uses AI to read the medical chart and automatically generate claims using fewer human coders, and generating up to a 5% revenue uplift for one customer, Mercy Health. Of course those paying those claims may have noticed, so we had a chat about the emerging AI RCM arms race–Matthew Holt

Ratnakar Lavu, Elevance

Ratnakar Lavu is the Chief Digital Information Officer of Elevance, the holding company of Blue Cross and Blue Shield plans in some 14 states (usually called Anthem Blue Cross). We had a great chat about what the priorities are for Elevance, and Ratnakar’s goal is to use tech to make the member experience simple. They are leaning heavily on AI and chatbots to help members inform themselves, and to help providers speed up approvals for prior auth et al. We also discussed how they work with vendors and how they help them scale.–Matthew Holt

Liberal Arts Education As a Counterbalance To Trumpian AI

By MIKE MAGEE

What’s wrong in the social science realm of health? Consider for example the mental health crises affecting teens across the nation, or the sharp decline in relationships and childbearing in young adult men and women, or the attack on vaccine policy by the wayward Kennedy, or the attempted dismantling of ACA health insurance coverage for millions, or the outright cruelty of ICE agents toward citizens and legal aliens, or the callous attitude toward casualties among soldiers and civilians in the Middle East by the President and the “Secretary of War”… and I could go on.

How should our nation begin to address these grievances? With our grandchildren either in or fast approaching higher education, I’ve been making a related case (as I see it) for the value and importance of a liberal arts education. In a strange way, Trump, in his attacks on the law and democracy, has instigated a resurgence of interest in history, philosophy, religion, political science, literature and the arts – even in this age of fantastical AI exuberance.

My own alma mater has been steadfast in its vision. As they state on their own website, “The liberal arts education at Le Moyne is rooted in the Jesuit tradition, which emphasizes the education of the whole person and the search for meaning and value as integral parts of an intellectual life. This commitment to a liberal arts education allows students to develop a broad range of skills and knowledge, fostering ethical leadership, service, and a commitment to social justice. The college’s Core Curriculum is central to its mission, ensuring that all students receive a thorough education in the liberal arts, which includes knowledge across multiple disciplines and the confidence to engage in intellectual inquiry as members of a global community.”

In simpler terms, Le Moyne’s front page headlines, “We strive for greatness always through the eyes of goodness.” I thought of this last week as I watched James Talarico’s speech accepting his Democratic Primary nomination for Senate in Texas. Attributing his convincing victory in part to his ability to attract a large turnout of Democrats, Independents, and Republicans, he issued what will certainly be his rallying cry: “The people of this state have given this country a little bit of hope, and a little bit of hope is a dangerous thing.”

Who is in danger? Talarico has tagged not only billionaires, but especially Christian Nationalists who, he says, “divide us by party, by race, by gender, by religion so that we don’t notice that they’re defunding our schools, gutting our health care and cutting taxes for themselves and their rich friends. It is the oldest strategy in the world: Divide and conquer. But we will not be conquered.”

This week CUNY political scientist Peter Beinart laid out a remarkable opinion piece in the New York Times, leaning heavily on liberal arts to make a convincing case against empire building and king Trump. Examining the President’s opposition to national sovereignty and international law conventions, he spotlights the President’s source of guidance – “My own morality. My own mind. It’s the only thing that can stop me.”

Beinart bolsters his case against Trump by digging deep into our own history, political science, literature and religion. Included in the journey are President William McKinley (intent on Caribbean Empire building), and his opponent, William Jennings Bryan, who claimed McKinley’s action “is not a step forward toward a broader destiny; it is a step backward, toward the narrow views of kings and emperors.” John Quincy Adams appears in 1821 stating such purposeful aggressions would undermine “the fundamental maxims of American policy (and) would insensibly change (democratic practice) from liberty to force.”

Others come forward as well, including Frederick Douglass, Henry David Thoreau, Ralph Waldo Emerson, W.E.B. Du Bois, and John Kenneth Galbraith. Taken together, Beinart’s impressive essay and Talarico’s acceptance speech, side by side within a short 24 hours, remind us all that the soul of our democracy requires health, unity, and the capacity to awaken “our better angels.”

To paraphrase the Le Moyne motto, our greatness must flow from our goodness. The core of a well-educated electorate is knowledge, wisdom, and values. In their absence, we are left with ignorance, greed, and hatred.

Mike Magee MD is a Medical Historian and regular contributor to THCB. He is the author of CODE BLUE: Inside America’s Medical Industrial Complex. (Grove/2020)

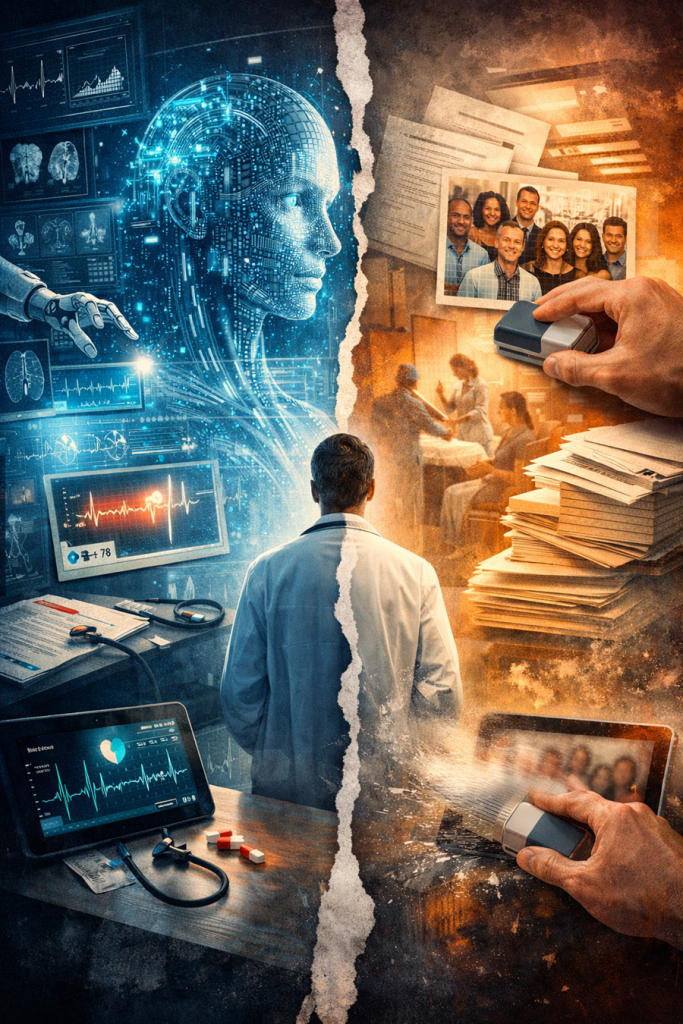

When Artificial Intelligence Starts Rewriting Reality

By BRIAN JOONDEPH

Artificial intelligence is quickly becoming a core part of healthcare operations. It drafts clinical notes, summarizes patient visits, flags abnormal labs, triages messages, reviews imaging, helps with prior authorizations, and increasingly guides decision support. AI is no longer just a side experiment in medicine; it is becoming a key interpreter of clinical reality.

That raises an important question for physicians, administrators, and policymakers alike: Is AI accurately reflecting the real world? Or subtly reshaping it?

The data are simple. According to the U.S. Census Bureau’s July 2023 estimates, about 75 percent of Americans identify as White (including Hispanic and non-Hispanic), around 14 percent as Black or African American, roughly 6 percent as Asian, and smaller percentages as Native American, Pacific Islander, or multiracial. Hispanic or Latino individuals, who can be of any race, make up roughly 19 percent of the population.

In brief, the data are measurable, verifiable, and accessible to the public.

I recently carried out a simple experiment with broader implications beyond image creation. I asked two top AI image-generation platforms to produce a group photo that reflects the racial composition of the U.S. population based on official Census data.
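For a sense of what a Census-matched group photo would look like, the arithmetic can be sketched in a few lines. This is an illustration only. The percentages are the rounded figures quoted above, the "Other or multiracial" share is simply the remainder (an assumption), and the group size of 20 is hypothetical rather than the size used in the experiment.

```python
# Rounded race shares (percent) as quoted in the text above.
RACE_SHARE_PCT = {
    "White": 75,
    "Black or African American": 14,
    "Asian": 6,
}

# Hispanic or Latino is an ethnicity that overlaps the race categories,
# so it is tracked separately rather than added to the race totals.
HISPANIC_SHARE_PCT = 19


def expected_headcounts(group_size):
    """Expected number of people per race category in a group of this size."""
    shares = dict(RACE_SHARE_PCT)
    # Assumption: whatever is left over is lumped into one remainder category.
    shares["Other or multiracial"] = 100 - sum(RACE_SHARE_PCT.values())
    return {group: round(pct * group_size / 100, 1) for group, pct in shares.items()}


def expected_hispanic_headcount(group_size):
    """Expected number of Hispanic or Latino people, of any race."""
    return round(HISPANIC_SHARE_PCT * group_size / 100, 1)


counts_for_20 = expected_headcounts(20)
hispanic_for_20 = expected_hispanic_headcount(20)
```

Because the expected counts are fractional, any real image of a small group can only approximate these proportions.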
