1986 was a great year. In the heyday of the worst-dressed decade in history, the Soviet Union launched the Mir space station, Pixar was founded, Microsoft went public, the first 3D printer was sold, and Matt Groening created The Simpsons. Meanwhile, two equally important but entirely different scientific leaps occurred in completely separate academic fields on opposite sides of the planet. Now, thirty-two years later, the birth of deep learning and the first implementation of breast screening are finally converging to create what could be the ultimate early diagnostic test for the most common cancer in women.

A brief history of deep learning

1986: In America, a small group of perceived agitators in the early field of machine learning published a paper in Nature entitled “Learning representations by back-propagating errors”. The authors, Rumelhart, Hinton and Williams, had gone against the grain of conventional wisdom and proved that by re-running a neural network’s output errors backwards through a system, they could dramatically improve performance at image perception tasks. Back-propagation (or back-prop for short) wasn’t their discovery (for we all stand on the shoulders of giants) but with the publishing of this paper they managed to finally convince the sceptical machine learning community that using hand-engineered features to ‘teach’ a computer what to look for was not the way forward. Both the massive efficiency gains of the technique, and the fact that painstaking feature engineering by subject matter experts was no longer required to discover underlying patterns in data, meant that back-propagation allowed artificial neural networks to be applied to a vastly greater array of problems than was previously possible. For many, 1986 marks the year that deep learning as we know it was born.

Although advanced in theory, deep learning was still far too computationally complex for computer chips to handle efficiently at the time. It took over two decades to show that commercially available processing power had finally advanced enough to allow significant increases in neural network performance. This was beautifully demonstrated by AlexNet in 2012, an eight-layered convolutional network run on Graphics Processing Units (GPUs) that convincingly trumped all previous attempts at image classification tests, beating all competition by a margin of over 10 percentage points in accuracy. Since then, GPUs have been the mainstay of all deep learning research. Only more recently has research started to produce functional and clinically proven deep learning software as applied to radiology. Modern deep learning systems no longer require hand-crafted feature-engineering to be taught what to look for in images; they can now learn for themselves. In short, deep learning was finally pushed into the mainstream.

A brief history of breast cancer screening

1986: In the UK, Professor Sir Patrick Forrest produced the ‘Forrest report’, commissioned by the forward-thinking Health Secretary, Kenneth Clarke. Having taken evidence on the effectiveness of breast cancer screening from several international pilot trials (America, Holland, Sweden, Scotland and the UK), Forrest concluded that the NHS should set up a national breast screening programme. This was swiftly incorporated into practice, and by 1988 the NHS had the world’s first invitation-based breast cancer screening programme. ‘Forrest units’ were set up across the UK for screening women between 50 and 64 years old, who were invited every three years for a mammogram. Then in 2012, when ongoing analysis of the screening programme proved the benefit of widening the age range for invitation to screening to 47–73 years old, the potential early discovery of the most common cancer in women was further bolstered. By 2014 the UK system was successfully discovering over 50 per cent of the female population’s breast cancers (within the target age range) before they became symptomatic. Screening is now the internationally recognised hallmark for best practice, and the NHS programme is seen worldwide as the gold standard. In short, the UK has the world’s best breast cancer screening programme, born in 1986.

After the advent of digital mammography in 2000, medical imaging data became available in a format amenable to computational analysis. By 2004, researchers had developed what became known as Computer Assisted Diagnosis systems (CAD), using old-fashioned feature-engineered programmes and supervised learning to highlight abnormalities on mammograms. These systems use hand-crafted features such as breast density, parenchymal texture, and the presence of a mass or micro-calcifications to determine whether or not a cancer might be present. They attempt to mimic an expert’s decision-making process, alerting a radiologist to specific features by highlighting regions on a mammogram according to human-recognised characteristics.

Problems with CAD

While traditional CAD demonstrates superior detection for breast cancers when used in combination with radiologists (‘second reader CAD’), it also significantly increases what is known as the ‘recall rate’, and therefore the cost of running screening programmes. In CAD-augmented breast screening, mammographic images are viewed by a radiologist (or two independent readers, as in the case of the UK). Readers first look for signs of breast cancer, then review a CAD overlay indicating the regions of interest highlighted by the system. Should there be any suspicion, a decision is made to recall the patient for further tests, such as an ultrasound and/or biopsy. The rate of recall is used as an indicator of the quality of a breast screening unit, with higher recall rates being indicative of a programme that is producing too many false positives. The sequelae of high recall rates include increased costs incurred by performing more follow-up tests. For example, Elmore et al demonstrated that for every $100 spent on screening with CAD, an additional $33 was spent to evaluate unnecessary false positive results. More importantly, significantly more women underwent undue stress and emotional worry as they feared the worst.

The reason behind the apparent rise in recall rates relates to the statistical underpinning of the CAD outputs’ performance. These systems were shown to be highly sensitive but poorly specific (e.g. an industry-leading CAD reports an algorithm-only sensitivity of 86 per cent but a specificity of only 53 per cent). This means that such systems are very good at flagging cancers that are present (few false negatives), but fairly poor at clearing mammograms that are actually normal (many false positives). Compare this with human performance alone (sensitivity and specificity of 86.9 per cent and 88.9 per cent respectively, Lehman et al), and you can begin to see why CAD hasn’t quite achieved its promise. In a seminal UK trial (CADET II), although CAD was shown to aid single reader detection of cancer, there was a 15 per cent relative increase in recall rates between human/CAD combination and standard double-reading by two humans.
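To make the gap concrete, here is a minimal sketch that turns the quoted sensitivity and specificity figures into expected counts. The population of 10,000 women and the 1 per cent cancer prevalence are round illustrative assumptions, not figures from any trial, and the function name is hypothetical.

```python
def expected_counts(n_screened, prevalence, sensitivity, specificity):
    """Expected confusion-matrix counts for a screened population."""
    cancers = n_screened * prevalence
    normals = n_screened - cancers
    tp = cancers * sensitivity   # cancers correctly flagged
    fn = cancers - tp            # cancers missed
    tn = normals * specificity   # normals correctly cleared
    fp = normals - tn            # normals wrongly flagged
    return {"tp": tp, "fn": fn, "tn": tn, "fp": fp}


# ASSUMPTION: 10,000 women screened at 1% prevalence (illustrative only).
# Sensitivity and specificity are the figures quoted in the text.
cad = expected_counts(10_000, 0.01, sensitivity=0.86, specificity=0.53)
human = expected_counts(10_000, 0.01, sensitivity=0.869, specificity=0.889)

for name, counts in (("CAD alone", cad), ("Human alone", human)):
    print(name, {k: round(v) for k, v in counts.items()})
```

Under these assumptions both find roughly 86 or 87 of the 100 cancers, but the algorithm alone would wrongly flag about 4,650 normal mammograms against about 1,100 for a human reader. This ignores the fact that in practice a radiologist reviews CAD marks before any recall decision, so it overstates the real-world effect; it only shows why low specificity pushes recall rates upwards.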

In addition to raising recall rates, CAD systems have been shown to be inconsistent and have significant differences in false positive rates. Furthermore, up to 30 per cent of cancers still present after a ‘normal’ mammogram; these are known as interval cancers. In essence, traditional CAD uses outdated feature-engineered programming in a partially successful attempt to improve screening detection. Unfortunately it has ended up both being ill-trusted by radiologists because of weak specificity, and causing patients significant unwarranted anxiety by increasing unnecessary recalls.

Speak to any breast radiologist and they will often smirk when you mention breast CAD — some ignore it, others don’t even use it. Speak to any patient, and they will tell you that receiving a recall invitation for more tests is one of the most heart-wrenching experiences they have ever had. No-one has yet cracked the puzzle of how to provide effective and accurate CAD breast screening without unnecessarily increasing recall rates.

Driving pressures

There are separate factors other than accuracy behind recent interest in deep learning in breast screening and the drive for automation. The success of screening programmes has driven both demand and costs, with an estimated 39 million mammograms now being performed each year in the US alone. There are consequently not enough human radiologists to keep up with the workload. The situation is even more pressured in the UK where mandatory double-reading means that the number of radiologists per test is double that of the US. The shortage of expensive specialised breast radiologists is getting so acute that murmurs of reducing the benchmark of double-reading to single-reading in the UK are being uttered — this is despite convincing evidence that double-reading is simply better.

The cost-inefficiency of double-reading, however, is a tempting target for policy makers looking to trim expenditure, regardless of the fact that neither single reading nor single-reader CAD-supported programmes have been shown to be as accurate. At the coal-face, especially in an underfunded NHS, it feels like something needs to give before something breaks.

Convergence of opportunities

Through a quirk of fate, the very nature of breast screening programmes has provided a wealth of digitised imaging data extremely well documented with patient outcomes. This rich source of data just so happens to be perfect for the needs of deep learning. By leveraging labelled digital images and their associated final pathological outcomes, we can provide data scientists with a near-perfect ‘ground truth’ dataset. If an image has a suspected cancer on it, this is either confirmed or denied histopathologically by a biopsy or surgery. If there is no suspected cancer, then follow up imaging three years later will confirm this. By allowing neural networks to train on this ground truth data of ‘image plus pathology result’ or ‘image plus follow up image’, systems can teach themselves the characteristics required to recognise a cancer out of a sea of ‘normals’, rather than relying on humans to do the teaching for them, as was the case with previous generations of CAD. The hope is that, by using deep learning, we can benefit from levels of accuracy previously unseen in breast screening.
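The labelling rule described above can be sketched in a few lines. This is only an illustration of the logic in the paragraph: the `ScreeningEpisode` record, its field names and the `ground_truth_label` function are hypothetical, and real screening databases will be considerably messier.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ScreeningEpisode:
    """Hypothetical record for one screening event (field names are assumptions)."""
    suspicious: bool                            # was a cancer suspected on the image?
    histology_malignant: Optional[bool] = None  # biopsy or surgery result, if any
    followup_normal: Optional[bool] = None      # next-round screen result, if any


def ground_truth_label(ep: ScreeningEpisode) -> Optional[int]:
    """Return 1 (cancer), 0 (normal) or None (no confirmed outcome yet)."""
    if ep.suspicious:
        # Suspected cancers are confirmed or denied by histopathology.
        if ep.histology_malignant is None:
            return None
        return 1 if ep.histology_malignant else 0
    # Unsuspicious images are confirmed by a normal follow-up screen.
    # ASSUMPTION: an abnormal follow-up is left unlabelled here, since it
    # needs its own work-up before the earlier image can be labelled.
    if ep.followup_normal:
        return 0
    return None
```

For example, `ground_truth_label(ScreeningEpisode(suspicious=True, histology_malignant=True))` gives 1, while an episode with no follow-up yet gives `None` and would be held out of training.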

Getting it right in practice

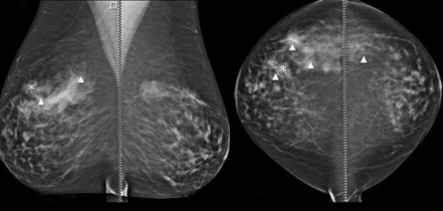

Where previous CAD systems aimed to mimic a radiologist’s thinking process by highlighting areas of known interest as demonstrated by experts, neural nets in fact do the opposite. They take the entire set of mammographic views (usually four), and decide if there is a cancer or not using features they themselves have learnt. Interpreting the outputs from a neural net is therefore more difficult, as it may be relying on features that humans aren’t even aware of. This isn’t as big a problem as it sounds; after all, radiologists have already almost unanimously rejected CAD overlays in the past as being too distracting. In fact, there is a growing sense that showing a radiologist where a possible abnormality is, or trying to classify it, may not be as useful as it sounds. Researchers in the field have suggested that future iterations of deep learning CAD systems do not give any localising information to radiologists at all, and instead simply allow them to review any images deemed suspicious by the system and marked for recall. This would avoid the risk of undermining radiologists’ own considered judgement, and allow them to make the best informed decision more efficiently, instead of being distracted by trying to interpret outputs from a deep learning algorithm.

However, deep learning models can be trained to present their reasoning usefully in other ways. Saliency maps highlight which regions of the image most triggered the neural net’s final decision. This is not dissimilar to previous CADs, but can be made more useful by thresholding highlights to only appear given certain conditions and certainty of abnormality. Recall decision thresholds can be matched to a given radiologist’s performance, by changing the operating point on the ROC curve of the system. This would allow high recall radiologists to be paired with a low recall version of an algorithm, providing a more nuanced approach to CAD support. Additionally, comparative image search from a databank of mammograms can provide radiologists with similar images and known pathological outcomes to review. Finally, CAD can be set up so as to only make its recall suggestion known if it disagrees with a human reader, thereby acting only as a safety net, if desired.
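Two of these ideas, moving the operating point and the disagreement-only safety net, can be sketched in plain Python. This is a rough illustration rather than how any particular product works: the function names, the example scores and the target recall rates are all made up for the purpose.

```python
def recall_rate(scores, threshold):
    """Fraction of cases the model would mark for recall at this threshold."""
    return sum(s >= threshold for s in scores) / len(scores)


def threshold_for_recall_rate(scores, target_rate):
    """Lowest threshold whose recall rate does not exceed target_rate.

    Raising the threshold moves the operating point along the ROC curve
    towards fewer recalls (higher specificity, lower sensitivity).
    """
    for t in sorted(set(scores)):
        if recall_rate(scores, t) <= target_rate:
            return t
    return float("inf")  # no threshold is strict enough: recall nobody


def safety_net_suggestion(model_score, threshold, reader_recalls):
    """Surface the model's opinion only when it disagrees with the reader."""
    model_recalls = model_score >= threshold
    if model_recalls == reader_recalls:
        return None  # agreement: stay silent
    return "recall" if model_recalls else "no recall"


# ASSUMPTION: made-up model scores on a small validation set.
validation_scores = [0.05, 0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9, 0.95]

# A high-recall reader is paired with a low-recall version of the model,
# and vice versa (the target rates are illustrative, not clinical values).
low_recall_threshold = threshold_for_recall_rate(validation_scores, 0.2)
high_recall_threshold = threshold_for_recall_rate(validation_scores, 0.5)
```

With these example scores the low-recall version only flags cases scoring 0.9 or above, while the high-recall version flags anything from 0.6 upwards. In a real system the threshold would be chosen on a large, representative validation set and checked against sensitivity as well as recall rate.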

How close are we?

It is not surprising to see a flurry of activity in this space across healthcare systems. The UK perhaps holds the highest quality data, as the NHS holds the world’s most robust national breast screening database with every mammogram result, biopsy and surgical outcome linked to every screening event.

I work as clinical lead at a London-based start-up, Kheiron Medical, which is focussed on deep learning in mammography. So far internal benchmark testing on algorithms trained on hundreds of thousands of mammograms has shown promising results, and we look forward to the outcomes of ongoing independent multi-site clinical trials.

Existing CAD providers are also reportedly working on deep learning solutions. Other start-ups include Lunit in Korea and Zebra Medical from Israel, and Google DeepMind has just entered the fray by partnering with Imperial College. On the global academic circuit there is productive research happening in Switzerland and Holland, and of course the United States. All of these researchers and companies are aiming to improve on previous iterations of CAD by supporting single reader detection while reducing recall rates using deep learning algorithms.

The next steps are clear — these algorithms need to go through strict medical device regulatory scrutiny to ensure they hold up against their claims. This will involve robust clinical trials both retrospectively to benchmark performance, and prospectively to ensure that algorithmic performance is maintained in a real world clinical setting.

The holy grail will be to enable single-reader programmes supported by accurate deep learning, potentially reducing the workload for the already overstretched double-reading radiologists. Deep learning in this context would categorically not replace single-reading radiologists; it would simply augment them by adding an extra layer of pattern recognition done by machines, leaving the humans to abstract and decide. This technology could improve the consistency and accuracy of existing single-reader programmes to the level of double-read programmes, as well as providing an immense new resource to countries yet to implement a screening programme at all.

The potential for deep learning support in national screening, as well as for underdeveloped healthcare systems to leapfrog into the deep learning era, may be just one or two years away.

It’s only taken thirty-two years so far…

If you are as excited as I am about the future of radiology artificial intelligence, and want to discuss these ideas, please do get in touch. I’m on Twitter @drhughharvey

Dr Harvey is a board certified radiologist and clinical academic, trained in the NHS and Europe’s leading cancer research institute, the ICR, where he was twice awarded Science Writer of the Year. He has worked at Babylon Health, heading up the regulatory affairs team, gaining world-first CE marking for an AI-supported triage service, and is now a consultant radiologist, Royal College of Radiologists informatics committee member, and advisor to AI start-up companies, including Algomedica and Kheiron Medical.
