This is a transcript of my HIMSS interview with Bevey Miner, EVP Healthcare Strategy & Policy at Consensus Cloud Solutions. Usually I’d show the video, but in this case my fancy new microphone didn’t work, so you’d only hear a one-sided conversation. Luckily, YouTube’s transcript somewhat came to the rescue–Matthew Holt

Matthew: Another THCB Spotlight. I am here with Bevey Miner, who a year ago I interviewed as Consensus Cloud Solutions, and now your sign says eFax. So, what the hell happened?

Bevey: Interesting question, Matthew. The company is Consensus Cloud Solutions. And the company’s always been Consensus Cloud Solutions since we spun off and went public ourselves. You’ll notice at our booth we’ve got the eFax brand — it’s eFax by Consensus Cloud Solutions. The reason we are showing up as eFax is because this year at HIMSS we really wanted to set the record straight: digital cloud faxing is not the problem with interoperability. Paper faxes are, but digital cloud faxing is not the problem.

The problem is all this unstructured data — all the unstructured data that happens with faxes, with scanned images, with TIFF images. All that unstructured data can’t be queried. It can’t be part of TEFCA. You can’t query what you can’t find.

Cloud faxing is send and receive all day long, and we do that very well and have been doing it for 27 years. About three years ago, we introduced an intelligent extraction solution. That solution doesn’t even have to start with the fax, but it allows the “find” piece to actually become the critical thing that we need to do. CMS defines interoperability as send, receive, find, and integrate. Fax technology handles send and receive all day long, but can’t find. So once we introduced a “find and intelligent extraction” solution, we can fire up TEFCA.

I’ve talked to a lot of regulators, including Dr. Thomas Keane and Amy Gleason with the CMS Align networks. You can’t ignore this pile of unstructured data and just assume the industry is magically going to have everything on FHIR. We’re all using FHIR because all of this stuff has really important patient information in it.

What we want to solve in the industry is: don’t say we have to axe the digital cloud fax. Let’s axe the paper fax machine. Digital cloud faxing isn’t going away — in fact, it’s growing, especially as we get rural health off of paper fax machines. The next level of maturity is digital cloud faxing. From there, once it’s digital, now you can do all sorts of things with it.

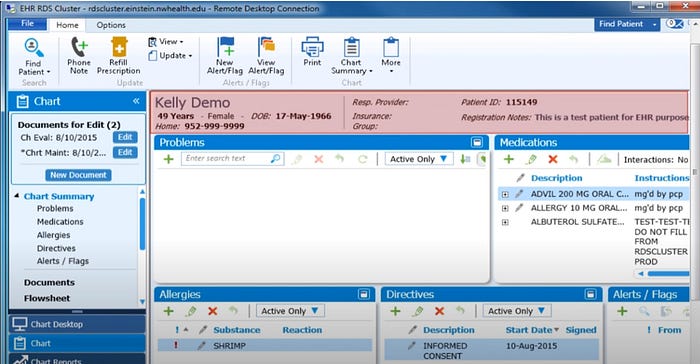

When we introduced electronic health records during meaningful use — I was at Allscripts at the time — our dream was that we would take this paper record and transform it into an electronic health record, so we could just get rid of the paper. Once we did that and there were discrete data elements in that EHR, we could do population health, clinical decision support, efficacy, all sorts of things — because there are discrete data elements now inside that electronic health record. That’s what a digital fax will do with the capability to do intelligence on top of it.

So we want to make the industry understand that the fax is not the problem. Extracting it and getting rid of all that unstructured data is the solution.
